A growing body of research is pushing paraconsistent logic — a once-niche branch of philosophical logic that allows reasoning in the presence of contradictions — into the mainstream of artificial intelligence design. As large language models and autonomous agents grapple with conflicting information sources, computer scientists and logicians are increasingly turning to non-classical logical frameworks to keep AI systems from collapsing under the weight of inconsistency.

Why Contradictions Break Classical AI

In classical logic, the principle known as ex contradictione quodlibet (“from a contradiction, anything follows”) means that a system holding even a single pair of contradictory statements can derive any conclusion at all. That property, also called the principle of explosion, is catastrophic for automated reasoning systems that ingest data from messy, real-world sources. Wikipedia, news archives, scientific databases, and crowd-sourced knowledge bases all routinely contain contradictions, yet AI systems are expected to function reliably despite them.
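
To make the principle of explosion concrete, here is a minimal Python sketch that checks classical entailment by brute force over truth tables. The function name `entails` and the atoms `p` and `q` are illustrative choices, not taken from any particular reasoning library.

```python
from itertools import product

def entails(premises, conclusion, atoms):
    """Classical entailment: no valuation makes every premise true
    while making the conclusion false."""
    for values in product([True, False], repeat=len(atoms)):
        v = dict(zip(atoms, values))
        if all(prem(v) for prem in premises) and not conclusion(v):
            return False  # found a counterexample
    return True

# Formulas are modelled as functions from a valuation to a truth value.
p = lambda v: v["p"]
not_p = lambda v: not v["p"]
q = lambda v: v["q"]  # a statement unrelated to p

# No valuation satisfies both p and not-p, so no counterexample can exist
# and the unrelated conclusion q "follows" vacuously.
explosion = entails([p, not_p], q, ["p", "q"])

# With consistent premises the same checker behaves sensibly.
consistent_case = entails([p], q, ["p", "q"])

print(explosion)        # True
print(consistent_case)  # False
```

The contradiction does no real work here: because the premises can never all be true together, the checker accepts any conclusion whatsoever, which is exactly the behaviour an automated reasoner cannot afford.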

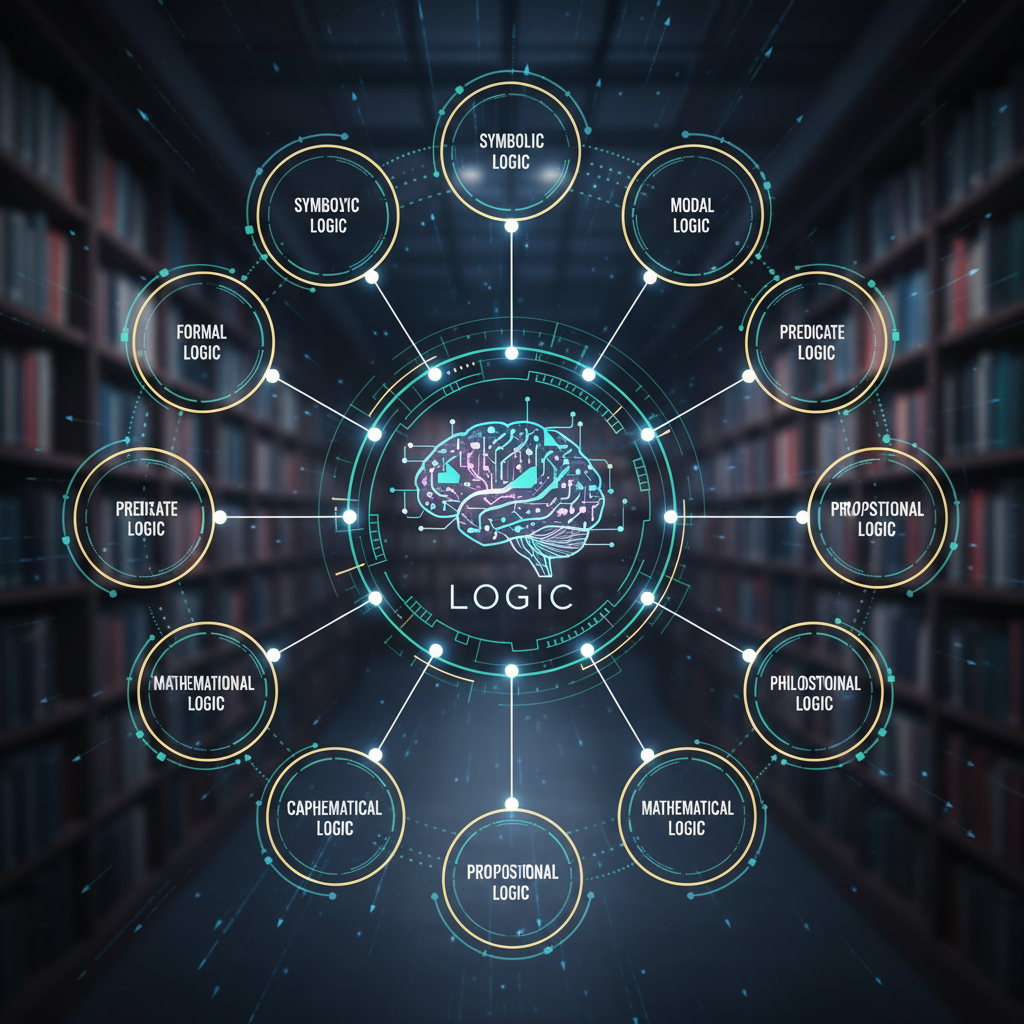

Paraconsistent logic, first formalised by Polish logician Stanisław Jaśkowski in the 1940s and later developed extensively by Brazilian philosopher Newton da Costa, rejects explosion. It allows a reasoning system to acknowledge that two contradictory statements are present without inferring nonsense from them. For decades the field remained largely the preserve of philosophical logicians, debated in specialist journals and surveyed in reference works such as the Stanford Encyclopedia of Philosophy, but it is now finding fresh purchase in computer science.
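
As a rough illustration of how explosion can be blocked, the sketch below uses one well-known paraconsistent system, the three-valued Logic of Paradox (LP) associated with Graham Priest. It is not the system of Jaśkowski or da Costa, and the encoding (integers 0, 1, 2 and the helper `lp_entails`) is an assumption made for this example.

```python
from itertools import product

# Three truth values: false, both true and false, true.
F, B, T = 0, 1, 2
DESIGNATED = {B, T}  # values that count as "at least true"

def neg(x):
    return 2 - x      # swaps T and F, leaves B fixed

def conj(x, y):
    return min(x, y)

def disj(x, y):
    return max(x, y)

def lp_entails(premises, conclusion, atoms):
    """LP entailment: whenever every premise is designated,
    the conclusion must be designated too."""
    for values in product([F, B, T], repeat=len(atoms)):
        v = dict(zip(atoms, values))
        if (all(prem(v) in DESIGNATED for prem in premises)
                and conclusion(v) not in DESIGNATED):
            return False
    return True

p = lambda v: v["p"]
not_p = lambda v: neg(v["p"])
q = lambda v: v["q"]
p_and_q = lambda v: conj(v["p"], v["q"])
p_or_q = lambda v: disj(v["p"], v["q"])

atoms = ["p", "q"]
explosion_holds = lp_entails([p, not_p], q, atoms)          # counterexample: p=B, q=F
syllogism_holds = lp_entails([p_or_q, not_p], q, atoms)     # same counterexample
simplification_holds = lp_entails([p_and_q], p, atoms)      # still valid

print(explosion_holds, syllogism_holds, simplification_holds)  # False False True
```

The price of tolerating the contradiction is visible in the second check: disjunctive syllogism, a rule classical reasoners rely on, is no longer valid, while ordinary inferences such as conjunction elimination survive.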

From Philosophy Departments to Machine Learning Labs

Recent work presented at logic and AI conferences has explored using paraconsistent frameworks to handle inconsistencies in knowledge graphs, multi-agent systems, and the outputs of large language models. Researchers argue that as generative AI tools are deployed across enterprise and government settings, their tendency to “hallucinate” or to surface contradictory claims drawn from different training sources requires a more sophisticated logical underpinning than classical Boolean reasoning provides.

Graham Priest, the Australian philosopher who has championed dialetheism — the view that some contradictions are actually true — has long argued that paraconsistent approaches are not just technical workarounds but reflect something deep about how reasoning works in practice. His work, surveyed in resources from the Association for Symbolic Logic, has influenced a generation of researchers now applying these ideas to computing. “Inconsistency is a feature of information, not a bug to be eliminated,” Priest has argued in lectures and published interviews, a stance increasingly echoed by AI safety researchers.

Practical Applications Emerging

One area of intense interest is the use of paraconsistent logic in automated theorem proving and formal verification of software, where partial inconsistency in specifications is common. Others include medical diagnosis systems, which must reconcile conflicting test results, and legal reasoning tools, where contradictory precedents are the norm rather than the exception.

Brazilian universities, building on da Costa’s foundational work, remain centres of paraconsistent research. The University of São Paulo and the State University of Campinas have produced ongoing studies linking paraconsistent annotated logic to robotics and decision-making systems. Meanwhile, European groups working under projects funded by bodies such as the European Research Council have explored how paraconsistent frameworks might support trustworthy AI under the EU’s emerging regulatory regime.

Significance for AI Safety and Governance

The renewed attention matters because regulators worldwide are demanding that AI systems be explainable, auditable, and resilient. A model that produces wildly different outputs when fed slightly inconsistent inputs fails all three tests. Paraconsistent logic offers a principled way to design systems that degrade gracefully — flagging contradictions rather than silently propagating arbitrary conclusions.
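
What “flagging contradictions” could look like in practice can be sketched with a small evidence store, loosely in the spirit of Belnap's four-valued scheme (true, false, both, neither). The class `EvidenceStore`, its method names, and the sample claims and sources are all hypothetical, chosen only for illustration.

```python
from collections import defaultdict
from enum import Enum

class Status(Enum):
    UNKNOWN = "unknown"    # no source has spoken
    TRUE = "true"          # only supporting sources
    FALSE = "false"        # only opposing sources
    CONFLICT = "conflict"  # sources disagree

class EvidenceStore:
    """Records which sources assert or deny each claim."""

    def __init__(self):
        self._for = defaultdict(set)
        self._against = defaultdict(set)

    def assert_claim(self, claim, holds, source):
        target = self._for if holds else self._against
        target[claim].add(source)

    def status(self, claim):
        has_for = bool(self._for.get(claim))
        has_against = bool(self._against.get(claim))
        if has_for and has_against:
            return Status.CONFLICT
        if has_for:
            return Status.TRUE
        if has_against:
            return Status.FALSE
        return Status.UNKNOWN

    def conflicts(self):
        """Claims with evidence on both sides, for audit or human review."""
        return sorted(c for c in self._for if self._against.get(c))

store = EvidenceStore()
store.assert_claim("drug_x_is_safe", True, "trial_a")
store.assert_claim("drug_x_is_safe", False, "trial_b")
store.assert_claim("patient_is_adult", True, "registry")

print(store.status("drug_x_is_safe"))    # Status.CONFLICT
print(store.status("patient_is_adult"))  # Status.TRUE
print(store.status("unrelated_claim"))   # Status.UNKNOWN
print(store.conflicts())                 # ['drug_x_is_safe']
```

The conflict over one claim stays local: it is reported with its sources and does not change the status of any other claim, which is the graceful degradation the paragraph above describes.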

Critics caution that adopting non-classical logics introduces its own complexities, including computational overhead and the philosophical challenge of explaining results to users accustomed to binary true-or-false reasoning. There is also no single paraconsistent logic; the field comprises dozens of competing systems, from relevance logics to Logics of Formal Inconsistency, each with different trade-offs.

What to Watch Next

Expect to see paraconsistent reasoning move closer to industrial AI pipelines over the next eighteen months, particularly in sectors where contradictory data is unavoidable: healthcare, finance, and intelligence analysis. Conferences such as the World Congress on Paraconsistency, alongside mainstream venues like NeurIPS and IJCAI, are likely to host more cross-disciplinary sessions bridging logicians and machine learning engineers. Whether the broader AI industry embraces these tools — or sticks with classical workarounds patched onto neural systems — will be one of the more interesting structural questions in machine reasoning over the coming years.

For more deep dives into logic, AI, and the philosophy of reasoning, explore related coverage at science.wide-ranging.com.